The inconvenient convenience of translator glasses

On one family vacation, my daughter bought tickets for what she casually described as “a Korean play with subtitles.” Before I could ask where the subtitles would appear, she added that we’d have to wear special glasses, like in an IMAX theater.

As someone firmly on Team Sub (as opposed to Team Dub), a position I’ve defended for years, especially since my wife and I disappeared into the K-drama rabbit hole, I was immediately intrigued by the technology. And because we were in Korea, I was fairly certain it wouldn’t disappoint.

The show itself, Inconvenient Convenience Store, is based on Kim Ho-yeon’s bestselling novel Second Chance Convenience Store—a warm, episodic story centered on a small convenience store in Cheongpa-dong and the quietly complicated lives that drift through it. At its heart is Dok-go, a homeless man with memory loss who becomes an unlikely employee at the Always Convenience Store. Through his interactions with the store’s regulars, Dok-go changes lives in his own distinct way: funny, humane, and deceptively simple—exactly the kind of storytelling that thrives in Korea’s theater district, where the production has been running steadily since 2023. (Kim Ho-yeon will be in Manila this September as a guest and speaker at the Manila International Book Festival.)

All of that would already have been enough. But then came the glasses.

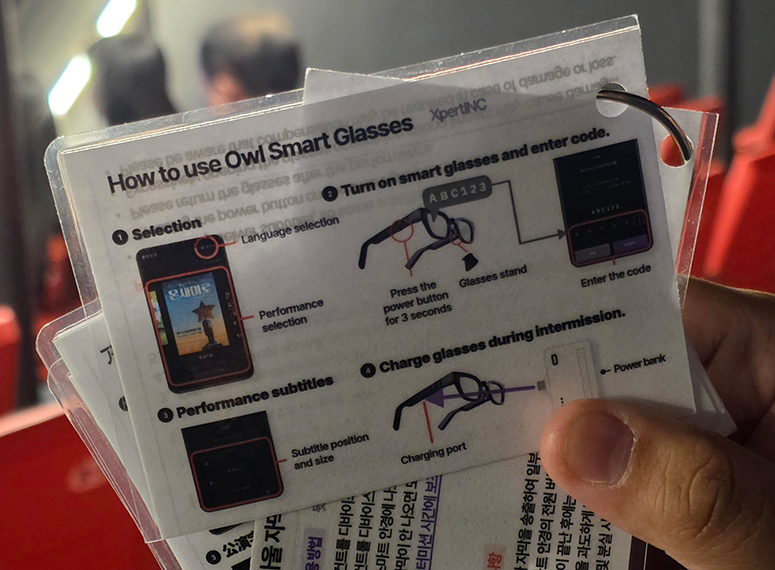

The proper term for what we were handed is something like AI-powered AR subtitle glasses—a subset of augmented reality smart glasses that use speech recognition and translation to display text directly within your field of vision. In practice, they pair with a smartphone (typically via Bluetooth or Wi-Fi), which handles the heavy lifting: processing audio, generating translations in real time, and streaming subtitles back to the lenses almost instantly. The interface still needs refinement—there is the occasional lag between performance and translation, and certain nuances inevitably get lost—but the potential for entertainment is immense.

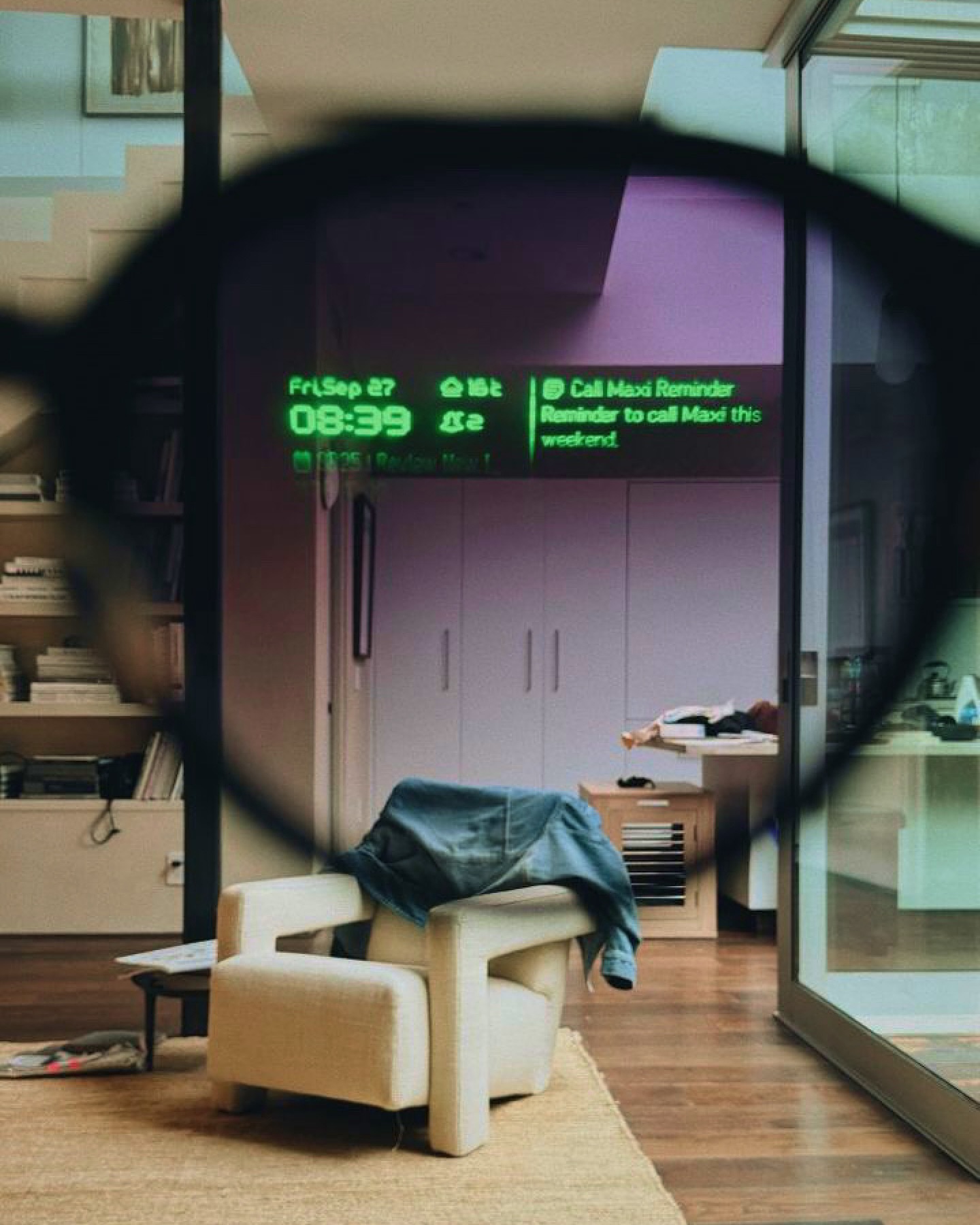

What you see isn’t exactly a screen, nor quite “text” in the traditional sense, but something closer to a polite overlay on reality: floating dialogue that appears precisely when and where you need it, then disappears before overstaying its welcome.

Crucially, the system is untethered from anything except a smartphone.

Anyone who has spent years watching subtitled films and dramas develops a certain rhythm—eyes flicking up and down, left to right, constantly moving between image and caption. It’s a small but relentless act of coordination. These glasses end that dance.

The subtitles no longer sit obediently at the bottom of a stationary screen. Instead, they follow you around like a loyal dog. They behave, uncannily, like sound itself—ambient, portable, impossible to leave behind. The effect is subtle at first, then very quickly revolutionary.

Once dialogue is no longer anchored to a screen, neither are you. In theory, you could leave your seat, step into the hallway, or even go to the toilet (after all, the one-act play runs close to two hours) and continue “reading” the performance in real time. You would miss the actors, of course—which theater purists would correctly argue is the entire point—but the narrative itself would remain intact, faithfully scrolling along. Unlike traditional closed captions, which demand your constant visual attention, these subtitles stay with you even when your eyes drift away from the stage.

AR subtitle glasses detach language from place. They turn dialogue into something closer to a live feed—less like reading and more like receiving, less like wired headphones and more like seamless Bluetooth audio.

And yet, for all this technological ambition, the glasses themselves are almost disappointingly ordinary, especially compared to the famously awkward 3D glasses of old. It feels like science fiction made mundane—as casually believable as universal language translators worn in the ear.

Of course, the gear itself—glasses and smartphone neatly packed in a sleek case—could easily tempt sticky-fingered theatergoers. That’s when you realize you’re in a first-world country: no one stood by the exit to check whether the devices were returned, something that would likely be a genuine concern elsewhere.

The physical ordinariness of the glasses is matched by the restraint of the technology itself. It never overshadows the performance; if anything, it restores and enhances it. Foreign audiences once had to choose between watching actors emote onstage while hearing dialogue as unintelligible as High Valyrian and reading the book beforehand to follow the story. With these glasses, you can finally watch the performance and follow the story at the same time, fully and comfortably, without compromise. It’s easy to imagine how transformative this technology could be for Filipino productions performed in regional languages before international audiences.

Which brings me, somewhat reluctantly, to a personal reckoning.

I could argue endlessly that subtitles preserve original performances in ways dubbing never can. But I had also long accepted their limitations: the darting eye movements, divided attention, and occasional missed details.

These glasses remove those limitations. They liberate subtitles instead of confining them.

By the end of the performance, I realized something slightly unsettling: this may well be the future not only of theater, but of language itself. Language is no longer something we chase across a screen, but something that arrives effortlessly, wherever we happen to be.

Which leaves one final, slightly inconvenient thought: if subtitles can now follow me out of the room, I may soon find myself “watching” my favorite shows while washing the dishes—or even the car.