I asked AI to express human feeling, and this is what I got

It looks like we are starting to live in a sci-fi movie. With so many artificial intelligence (AI) chat apps available, we will soon rely on this technology for business, entertainment, design, and even art. But is that a bad thing? Will it take over the world and render us mindless beings wholly dependent on computer interaction?

Speaking of AI, I’d like to focus on the fascinating ChatGPT, which, according to ZDnet, is “a natural language processing tool driven by AI technology that allows you to have human-like conversations and much more with the chatbot. The language model can answer questions and assist you with tasks, such as composing emails, essays, and code.”

This will certainly make the work of marketers, researchers, coders and others so much easier. Some companies already have chatbots to attend to their customers, but these are basic and not on the same level as ChatGPT. ChatGPT can also be used by writers, journalists and social media influencers. It can write your letters and essays, summarize content, create a résumé, and more, which to me is a bit unnerving. Where does authenticity belong in an AI world? Does AI have a soul? Am I getting too philosophical over technology?

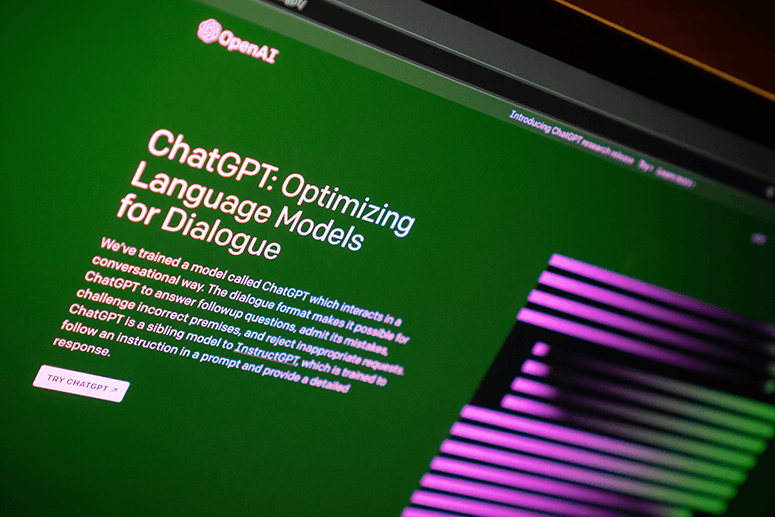

ChatGPT was developed by OpenAI and launched in November 2022. Elon Musk, who was one of the founders of the company (he left in 2018), said: “ChatGPT is scary good. We are not far from dangerously strong AI.” ZDnet further states: “People are expressing concerns about AI chatbots replacing or atrophying human intelligence. For example, the chatbot can write an article on any topic efficiently (though not necessarily accurately) within seconds, potentially eliminating the need for a human writer.”

There you go: it has a built-in flaw. It is not always accurate, and it is not all-knowing, since its training data extends only up to 2021. Sometimes, the output doesn’t make sense. The tech world calls these inaccuracies “hallucinations.”

A New York Times article, “When A.I. Chatbots Hallucinate,” raises the fear that “people will rely too heavily on these systems for medical and legal advice and other information they use to make decisions.” It explains further, “the technology, called generative A.I., relies on a complex algorithm that analyzes the way humans put words together on the internet. It does not decide what is true and what is not.”

Furthermore, some schools in the US have blocked ChatGPT and other apps due to their potential for cheating (it can quickly write a term paper!). Despite that, “according to analysis by Swiss bank UBS, ChatGPT is the fastest-growing app of all time. The analysis estimates that ChatGPT had 100 million active users in January, only two months after its launch. For comparison, it took nine months for TikTok to reach 100 million users.”

Similar products on the market include Chatsonic, GPT-3 Playground, YouChat, and Bing AI Chat.

I decided to try ChatGPT by visiting its website (chat.openai.com) and creating an OpenAI account. I opted for the free version, which is good enough for this experiment. One can also upgrade for $20 or more a month and get extra perks.

Since I was curious whether technology can express feelings, compassion, or empathy, I sent this message (or “prompt”): “Provide me with a 20-sentence write-up on how it feels to lose a loved one in the first person.” This is what ChatGPT sent me.

“Losing a loved one is an indescribable feeling. At first, it’s like a punch in the gut that takes your breath away. It feels like my world has been shattered into a million pieces. The shock of the loss is hard to process, and I find myself in a state of disbelief, thinking that this can’t be real.

As time goes on, the reality of the situation starts to sink in, and the pain becomes more intense. I feel like there’s a hole in my heart that can never be filled. I find myself constantly thinking about the person I lost, replaying memories in my head and wondering what could have been different.

Emotions come in waves, and I find myself cycling between sadness, anger, and numbness. There are times when I feel like I’m barely holding it together, and other times when I can’t stop crying. I feel like I’m on an emotional rollercoaster that I can’t get off of.

The physical symptoms of grief are also present, with exhaustion and loss of appetite being the most prominent. It’s hard to concentrate on anything and making decisions feels impossible. The world around me feels like it’s moving too fast, and I can’t keep up.

As time goes on, I start to feel a sense of emptiness and loneliness. Everything reminds me of the person I lost, and it’s hard to escape the pain. I find myself withdrawing from social situations and isolating myself from others. There are times when I feel like I’m starting to heal, but then something happens that triggers the pain all over again. The grief never really goes away, but it does get easier to live with over time.

Ultimately, losing a loved one is a deeply personal and unique experience. It’s something that changes you forever and teaches you to appreciate the people in your life even more.”

Amazing, right? If I hadn’t known it was written by a chatbot, I would have thought it came from a human being. Is there something missing, though? I think it’s the individuality or color of a person, especially someone you know: the words they normally use, their tone, their voice. It’s subtle.

So is this highly evolved, human-mimicking, AI-powered chatbot good or bad?

Many professionals, including marketers, programmers, educators, students, researchers, copywriters, doctors, scientists, and journalists, can use ChatGPT to enhance their work and manage their time. It could also improve customer satisfaction. Plus, it is accessible, and signing up for an account is easy.

On the downside, it draws on limited data, and its output could contain inaccuracies. Some information may also come from unreliable sources on the internet. Then there are the “hallucinations,” when the output simply doesn’t make sense.

Using ChatGPT may also have legal consequences, as it may “pull” data from a copyrighted work. It is not appropriate for emotional or medical support, it should not be used for counselling, and it cannot understand nuances in conversation.

Bill Gates stated that ChatGPT is one of the two technological advancements he has seen that struck him as revolutionary in his lifetime: “It will change the way people work, learn, travel, get health care and communicate with each other. Entire industries will reorient around it. Businesses will distinguish themselves by how well they use it.”

I partially agree because I love it when technology helps make life easier—but when it comes to feeling, there’s nothing like the human touch.